Dear Epoll: Are You Truly Born of Ignorance?

So there I was, minding my own business, catching up on the archive episodes of the amazing BSD Now podcast, when esteemed diet coke drinker Bryan Cantrill appeared as a feature. In what was a part-hilarious, part-ruthless discussion, Bryan yells at disks erm Linux, specifically calling out Epoll for a solid ten minutes straight.

That got me curious: what was the actual design discussion around Epoll, and is the hatred of Epoll actually deserved? Well, dear reader, I couldn’t find a good answer on the topic. So like any good internet citizen, I decided to scratch my own itch and delve into the annals of history to get to the bottom of a Linux feature written more than two decades ago. Get your popcorn ready!

Making Web Servers Faster

The year is 1999. In just under three years, the percentage of home internet usage in the U.S. will have quadrupled. With such a dramatic increase in traffic, code paths related to networking became ever more load-bearing. It no longer made sense for a web server to handle thousands of web clients sequentially and synchronously.

We needed a better way - a more concurrent way - of managing these connections. For these workloads, the only robust choice was typically to use select (and later poll), which allows the server to ask the kernel, “hey, from these thousands of open connections, which of them have I/O ready?” The kernel then responds diligently with the subset that are ready.

There’s only one problem though: these syscalls are stateless. With thousands of active connections (and hence thousands of file descriptors), every call to poll hands the kernel the entire interest list to scan, even though most calls are expected to turn up only a couple of fds as ready. This “needle in a haystack” problem was becoming an active bottleneck to throughput.
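To make the “needle in a haystack” cost concrete, here is a minimal sketch using plain POSIX poll. The helper name `poll_scan_demo` and the `MAX_PIPES` limit are made up for illustration; nothing here comes from the original thread. It opens a batch of pipes, makes exactly one of them readable, and counts how many entries user space has to walk to find it.

```c
#include <poll.h>
#include <unistd.h>

#define MAX_PIPES 256 /* arbitrary cap for this sketch */

/* Hypothetical demo helper: opens `npipes` pipes, makes only pipe `hot`
 * readable, and polls all of them once.
 * Returns how many descriptors the scan found ready, or -1 on error.
 * `*scanned` receives how many entries had to be inspected to find them. */
int poll_scan_demo(int npipes, int hot, int *scanned)
{
    struct pollfd fds[MAX_PIPES];
    int wr[MAX_PIPES];
    int opened = 0, hits = -1;

    *scanned = 0;
    if (npipes < 1 || npipes > MAX_PIPES || hot < 0 || hot >= npipes)
        return -1;

    for (; opened < npipes; opened++) {
        int p[2];
        if (pipe(p) != 0)
            goto out;
        fds[opened].fd = p[0];
        fds[opened].events = POLLIN;
        fds[opened].revents = 0;
        wr[opened] = p[1];
    }

    if (write(wr[hot], "x", 1) != 1)
        goto out;

    /* Stateless: the whole array goes to the kernel on every call... */
    if (poll(fds, (nfds_t)npipes, 0) < 0)
        goto out;

    /* ...and the caller walks the whole array again to find the hits. */
    hits = 0;
    for (int i = 0; i < npipes; i++) {
        (*scanned)++;
        if (fds[i].revents & POLLIN)
            hits++;
    }

out:
    for (int i = 0; i < opened; i++) {
        close(fds[i].fd);
        close(wr[i]);
    }
    return hits;
}
```

With 64 pipes and a single readable one, the call reports one ready descriptor but still has to inspect all 64 entries, and a real server pays that cost on every trip around its event loop.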

Contemporary users of Linux know it as a stable, robust kernel deployed on billions of machines worldwide. But in 1999 it was more of a “cesspool of experimentation”, to put it mildly.

Some experiments were certainly more DOA than others (cough AIO cough). Other experiments, like real-time signals, had more optimistic horizons at the time, with some even proposing I/O models based around them. Suffice it to say, these ideas never really panned out, but the interest was there, and it was strong.

Many a forum post and mailing list thread has been opened on this topic, but I’d like to leave the reader with a 2001 USENIX paper that goes through the topic in good detail.

So nothing was sticking to the wall, and dammit we need to serve those cat videos! How am I supposed to know when I/O is ready for consumption without killing my performance?

This was the environment in which Linux contributor and IBM engineer Davide had started developing Epoll, presenting a first draft publicly on July 1st, 2001. Initially being exposed as a device, /dev/epoll, it was later refactored to get its own syscalls before being mailed out on October 28th, 2002.

The author would like a bit of appreciation for the effort to locate this thread, especially since (a) epoll predates git, (b) the epoll(7) manpage incorrectly lists the kernel release version 2.5.44 when it was actually released in 2.5.45, and (c) the querying abilities of lkml.org are pretty lackluster.

Right from the outset, all the documentation provided - including its informational web page - makes Epoll’s design goals abundantly clear:

- Be fast. Faster than any other solution already in the kernel, and

- Have a minimally invasive patch set, especially in the hot paths within //fs/pipe.c

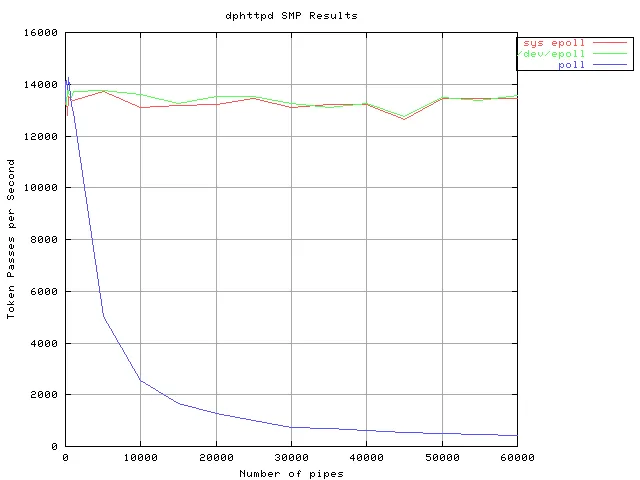

Much to Epoll’s credit, it does largely succeed in the goals it set out to achieve. The graph clearly shows that while poll’s performance decayed sharply as the number of pipes grew, Epoll remained consistent.

Much to my surprise, in one of the very first responses to the thread, Martin (a new character here) asks how Epoll compares to kqueue. Martin even makes the case for standardizing around a common interface.

So why, oh why dear reader, did we not get kqueue for Linux: License concerns? Criticism of the interface? Performance issues? Well, from the horse’s mouth, the answer might not be that deep at all. No one bothered to port the code to Linux, and Epoll already had a patch set that worked.

But as I’ve hinted, the mailing thread gets much deeper, and the breakdown of communication much more entertaining. Shall we go on?

The Mailing List Giveth, the Mailing List Taketh Away

Bert, a new character in our story, is quick to note how lovely the interface is, especially the ‘edge’ nature of it all. He even provides a clean code snippet as a demo:

    for(;;) {
        nfds = sys_epoll_wait(kdpfd, &pfds, -1);

        for(n = 0; n < nfds; ++n) {
            if (pfds[n].fd == s) {
                client = accept(s, (struct sockaddr*)&local, &addrlen);
                sys_epoll_ctl(kdpfd, EP_CTL_ADD, client, POLLIN);
            }
            else
                do_use_fd(pfds[n].fd);
        }
    }

What you’re looking at is the skeleton of an asynchronous event loop, and it should go something like this:

- sys_epoll_wait() blocks until I/O becomes ready.
- On wakeup, consume all events notified to you by the kernel.
- If the event occurred on the listening socket, it means a new client is ready to be accepted, so accept the client and register interest in them with Epoll.
- Otherwise, it’s a client event, so call do_use_fd() on the client.

Clear as day, right? Not exactly, because this code has a bug in it. It’s subtle, but it’s critical to this story that we understand why this snippet is wrong.

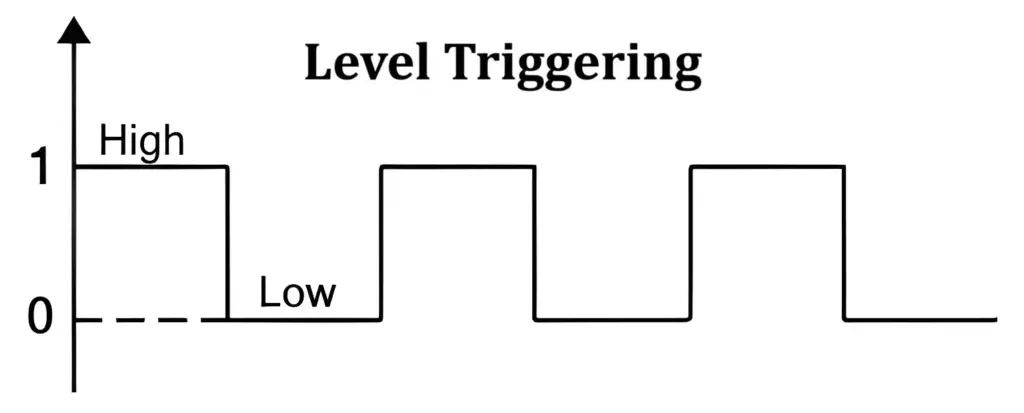

When designing an event notification system like Epoll you have two main semantic models you can choose from: edge-triggered semantics and level-triggered semantics. These names are actually borrowed from electrical engineers describing signals in a circuit. In level-triggered circuits, the circuit is considered “active” as long as the underlying signal remains in the high (1) state, and only becomes inactive when the signal drops down to the low (0) state.

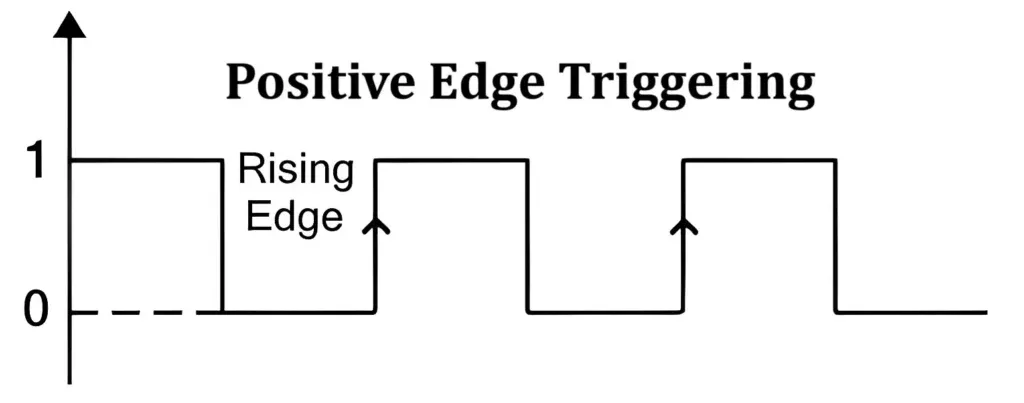

Edge-triggered circuits, in comparison, only activate when the underlying signal transitions between states. Once they’ve completed activating, they fall back to the inactive state, even if the underlying signal remains high.

Most event systems (all of which predate Epoll) operate with level-triggered semantics: poll from POSIX; kqueue from FreeBSD; EventPorts from Solaris; etc. Epoll, on the other hand, chose to go with edge-triggered semantics. Why? Because this is Linux! We value raw performance above all else (putting aside that no one ever actually profiled the difference between the two)!

And this is why Bert’s code is subtly wrong. The newly-accepted client connection may have I/O ready (i.e. the signal is already in the raised state) before the next call to sys_epoll_wait. In that scenario, Epoll doesn’t treat the client connection being ready as an event, and the blocking syscall suddenly stalls the entire thread indefinitely.
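The difference between the two models can be observed directly. The sketch below uses today’s epoll API (`epoll_create1`, `epoll_ctl`, `epoll_wait`, `EPOLLET`) rather than the `sys_epoll_*` calls from the 2002 thread, and the helper name `wait_twice` is invented for illustration. It makes a pipe readable, then asks Epoll twice in a row whether anything is ready, without reading the data in between.

```c
#include <stdint.h>
#include <sys/epoll.h>
#include <unistd.h>

/* Hypothetical demo helper: registers a pipe's read end with
 * EPOLLIN | extra_flags, writes one byte, then polls epoll twice
 * (timeout 0) WITHOUT draining the pipe in between.
 * Returns 0 on success; *first and *second receive the two
 * epoll_wait() results. */
int wait_twice(uint32_t extra_flags, int *first, int *second)
{
    int p[2], ep, rc = -1;
    struct epoll_event ev = { .events = EPOLLIN | extra_flags };
    struct epoll_event out;

    if (pipe(p) != 0)
        return -1;
    ep = epoll_create1(0);
    if (ep < 0)
        goto close_pipe;

    ev.data.fd = p[0];
    if (epoll_ctl(ep, EPOLL_CTL_ADD, p[0], &ev) != 0)
        goto close_all;

    /* The "signal" goes high: one unread byte sits in the pipe. */
    if (write(p[1], "x", 1) != 1)
        goto close_all;

    *first = epoll_wait(ep, &out, 1, 0);  /* the rising edge is reported */
    *second = epoll_wait(ep, &out, 1, 0); /* still high, but no new edge */
    rc = 0;

close_all:
    close(ep);
close_pipe:
    close(p[0]);
    close(p[1]);
    return rc;
}
```

Called with `extra_flags` set to 0 (level-triggered), both waits report one ready descriptor, because the byte is still sitting there. Called with `EPOLLET`, the first wait reports it and the second reports nothing. With a timeout of -1 instead of 0, that second call is the indefinite stall described above.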

A corrected version would make sure that connections were always fully drained of I/O before calling sys_epoll_wait again:

    for(;;) {
        nfds = sys_epoll_wait(kdpfd, &pfds, -1);

        for(n = 0; n < nfds; ++n) {
            c = pfds[n].fd;
            if (c == s) {
                client = accept(s, (struct sockaddr*)&local, &addrlen);
                sys_epoll_ctl(kdpfd, EP_CTL_ADD, client, POLLIN);
                c = client;
            }
            do_use_fd(c); // <-- no longer gated by `else`
        }
    }

Now, I don’t know about you, but if I’ve just designed a new feature, and a kernel engineering colleague of mine immediately foot-guns himself 5 minutes into using it, I’d go back to the drawing board. John, a new character in our story, swoops in to say exactly this, quote:

As you have amply demonstrated, the current epoll API is error prone.

Davide doesn’t handle the criticism very well, and Martin (the guy who mentioned kqueue) calls him out for it (as he very much ought to, in my mind).

Mentioning the kqueue paper, putting smiley faces in his emails, and being level headed and emotionally intelligent… Are we sure Martin isn’t some BSD double-operative?

John and Davide exchange some pleasantries, which is basically business as usual for this mailing list. But in the midst of all this mudslinging, something at the back of my mind was bugging me: I’ve seen this code snippet before. It felt familiar.

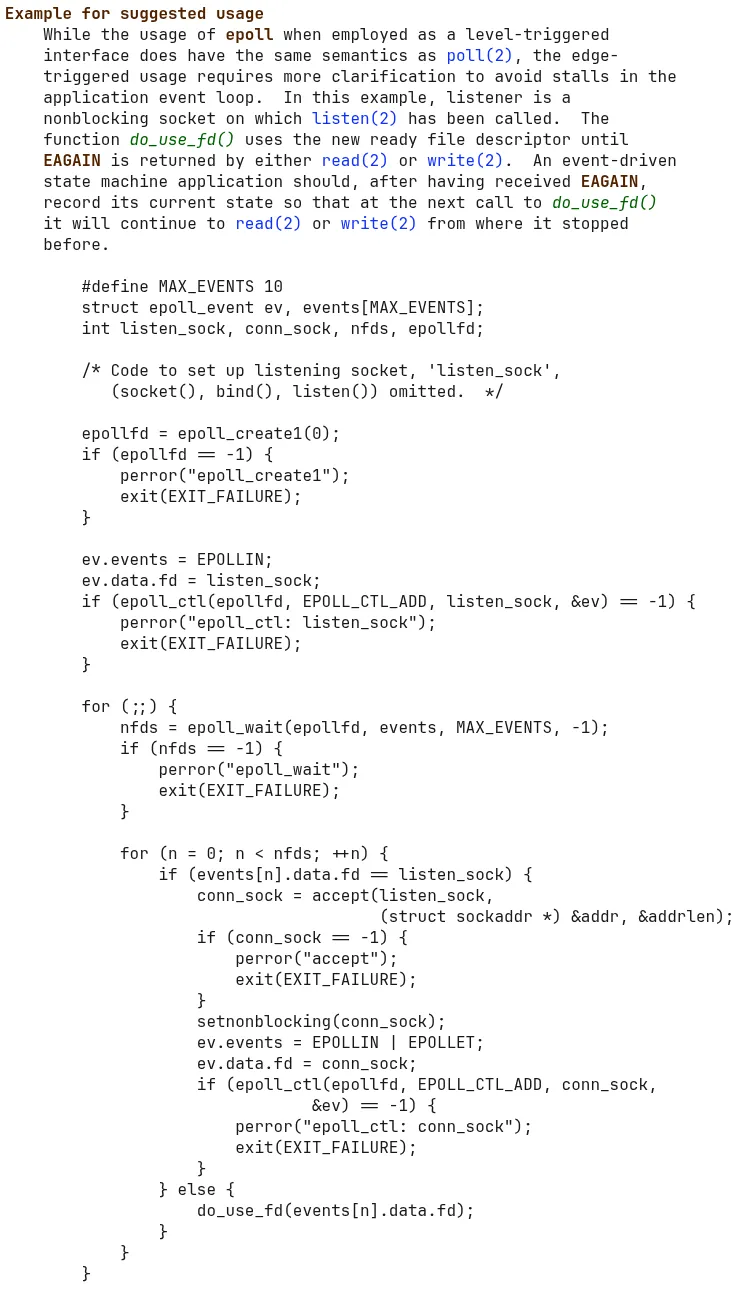

Sure enough, after some digging, I realized why. If you go to Epoll’s manpage, you’ll find a handy section explaining the perils of edge-triggered semantics. They even provide a friendly code snippet to demonstrate what proper usage of the API looks like:

Well, well, well. I guess Martin and John may have been onto something, because if even the Epoll manpage couldn’t get this code right, why should we expect the users to? And as it turns out, despite the author very adamantly disagreeing with the idea initially, Linux had to quietly add a check to the internal ep_insert function for this exact condition, just as John was suggesting:

    /* If the file is already "ready" we drop it inside the ready list */
    if (revents && !ep_is_linked(epi)) {
        list_add_tail(&epi->rdllink, &ep->rdllist);
        ep_pm_stay_awake(epi);

        /* Notify waiting tasks that events are available */
        if (waitqueue_active(&ep->wq))
            wake_up(&ep->wq);
        if (waitqueue_active(&ep->poll_wait))
            pwake++;
    }

So to Answer the Title Question…

I came into this thinking I would find a severe lack of feedback being the root cause. That Epoll was rubber stamped and LGTM’d into the kernel simply because no one had the time nor the standing to criticize it.

Instead, what I found was that every long-term flaw of Epoll was pointed out ahead of its release, and yet it was released anyway. It shipped with edge-triggered semantics on the strength of a dogmatic performance-at-all-costs mindset, despite those semantics being clearly more confusing to users, and without anyone even quantifying the performance benefit over level-triggered semantics. It launched without EPOLLONESHOT and EPOLLEXCLUSIVE, which made it essentially useless in multi-threaded programs. Deeper design woes, like associating events to the underlying struct file* instead of the file descriptor, have no chance of being fixed: they’re too ingrained to change.
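The struct file* problem is easier to appreciate with a small experiment. This is a sketch against the modern epoll API, with a helper name (`closed_fd_still_fires`) invented for illustration. It registers a descriptor, duplicates it, closes the registered one, and then checks whether Epoll has actually forgotten about it.

```c
#include <errno.h>
#include <sys/epoll.h>
#include <unistd.h>

/* Hypothetical demo helper: registers a pipe's read end with epoll,
 * dup()s it, closes the registered descriptor, then makes the pipe
 * readable. Returns 0 on success and reports:
 *   *events_after_close - epoll_wait() result after the close
 *   *del_errno          - errno from EPOLL_CTL_DEL on the closed fd */
int closed_fd_still_fires(int *events_after_close, int *del_errno)
{
    int p[2], ep, registered, dup_fd = -1, rc = -1;
    struct epoll_event ev = { .events = EPOLLIN };
    struct epoll_event out;

    if (pipe(p) != 0)
        return -1;
    ep = epoll_create1(0);
    if (ep < 0)
        goto cleanup;

    registered = p[0];
    ev.data.fd = registered;
    if (epoll_ctl(ep, EPOLL_CTL_ADD, registered, &ev) != 0)
        goto cleanup;

    /* A second descriptor keeps the underlying open file alive. */
    dup_fd = dup(registered);
    if (dup_fd < 0)
        goto cleanup;
    close(registered);
    p[0] = -1;

    if (write(p[1], "x", 1) != 1)
        goto cleanup;

    /* The registration followed the open file, not the descriptor. */
    *events_after_close = epoll_wait(ep, &out, 1, 0);

    /* And it can no longer be removed through the descriptor we closed. */
    errno = 0;
    epoll_ctl(ep, EPOLL_CTL_DEL, registered, NULL);
    *del_errno = errno;
    rc = 0;

cleanup:
    if (ep >= 0)
        close(ep);
    if (dup_fd >= 0)
        close(dup_fd);
    if (p[0] >= 0)
        close(p[0]);
    close(p[1]);
    return rc;
}
```

On Linux the wait still reports an event for a descriptor the program has already closed, and the attempt to deregister it fails with EBADF. The registration only goes away once every descriptor referring to the same open file is closed, which is the behaviour the epoll(7) manpage documents in its Q&A section.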

And yet, despite all that, Epoll quietly powers a huge share of internet traffic through its use in event-driven systems like Node.js and Nginx.

Back to the question: in accordance with Betteridge’s law of headlines, “ignorance” may be inaccurate. Another word comes to mind instead: stubbornness. Years later, in 2007, you could still find Davide on the mailing list defending his controversial design choices.

And for all that, Epoll must be satisfied forever being known as “good enough” rather than “great.”

If You Liked this, You Should Also See…

- Dan Kegel’s original C10K article, which frames the growing pains of the dot com boom as a fun challenge to concurrently serve 10,000 active client connections on a server, and what’s needed to get there.
- Marek’s two-part blog: Epoll is fundamentally broken, parts 1 and 2.
- Bryan Cantrill gave a talk in 2014 about LX-branded zones and how Illumos was able to natively run Linux binaries on Unix. The entire thing is great, and porting Epoll was discussed around minute 45.
- There’s an LWN article that was posted the day Epoll was launched, and it has some interesting user discussion on it.